AI is a hot topic in and of itself, and when we combine it with games, there’s always a great headline to discuss: “In Game X, the AI engine has finally beaten the human champion.” Each clash between the human mind and machine intellect is a fascinating story of two very different gladiators pitted against each other, and the only thing they have in common is the need to win. As the hype dies down, however, we cannot help but wonder: “Why are we spending so many resources to teach AI systems how to play games well?” The answer: to teach them how to solve real-world problems.

Learning through play is a popular methodology for many species: just as kittens explore the limits of their physical prowess via rough-and-tumble play, we use games to simulate situations where we need to solve a problem. In a game environment, the stress associated with problem-solving is alleviated, leaving more space for creativity. Although this psychological aspect doesn’t really translate to AI, the core mechanic still stands: a game is a testing ground for AI, designed to train its ability to tackle complex problems.

The (Im)Perfect Opportunity

AI boasts a rich presence in games; throughout its history, it has been challenging players to try and defeat it. As computational power increased, AI engines finally got the opportunity to compete with top players: in chess, this stand-off culminated in the 1997 match between IBM’s Deep Blue and world champion Garry Kasparov, the first time a reigning world champion lost a match to a computer under standard tournament conditions. Chess is in no way a “simple” game, but its mechanics allowed Deep Blue to lean heavily on brute force, searching millions of positions to anticipate the opponent’s replies, and this is far from the real intelligence we like to attribute to AI. Go and shogi, which AI conquered in the following decades, are too large for exhaustive search alone; the engines that mastered them combined search with learned evaluation.
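
The brute-force idea can be illustrated on a toy game. The sketch below is not how Deep Blue worked internally; it assumes a hypothetical take-away game (players alternate removing 1 to 3 stones, and whoever takes the last stone wins) that is small enough to search exhaustively. The function names are made up for this example.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # assumed rule: a player may take 1, 2 or 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """Return True if the player to move can force a win.

    Exhaustive search: try every legal move and check whether it leaves
    the opponent in a position from which they cannot win.
    With 0 stones left, the player to move has already lost.
    """
    return any(not can_win(stones - take) for take in MOVES if take <= stones)


def best_move(stones: int) -> int:
    """Pick a move that leaves the opponent in a losing position, if one exists."""
    for take in MOVES:
        if take <= stones and not can_win(stones - take):
            return take
    return 1  # every move loses against perfect play, so just take one stone


if __name__ == "__main__":
    for stones in range(1, 9):
        verdict = "win" if can_win(stones) else "loss"
        print(f"{stones} stones: {verdict} for the player to move, take {best_move(stones)}")
```

A chess engine cannot search to the end of the game like this. It cuts the search off at some depth and scores the resulting position with an evaluation function, but the principle of trying every move and assuming the opponent picks their best reply is the same.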

Mastering chess was a major breakthrough, but an important caveat is that chess is a game of perfect information: both players can see the entire board and every move made, which somewhat simplifies decision-making. The next milestone for AI was operating under imperfect information, i.e. playing without full knowledge of the opponent’s actions. In these games, the AI cannot see its opponent most of the time and faces the additional challenge of predicting what the opponent might do and adjusting its strategy accordingly.

To tackle imperfect-information games, the teams behind two of today’s best-known AI engines, OpenAI and Google’s DeepMind, chose games with a lot of strategic depth: Dota 2 and StarCraft II, respectively. In essence, both games challenge players to outsmart their opponents by controlling their units more effectively (one unit in Dota 2 and dozens of units in StarCraft II) without seeing the opponent’s actions unless the units come face to face. Moreover, several strategic elements introduce even more complications:

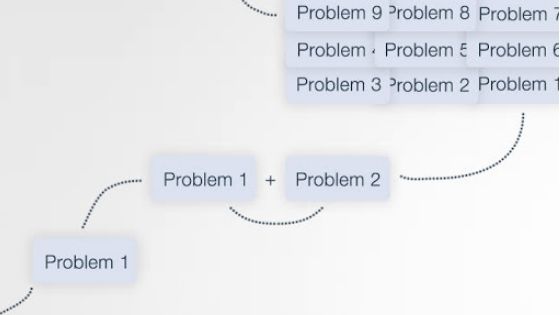

- Game theory: These games are balanced in a way that offers no single best strategy: A counters B, B counters C, and C counters A. Therefore, the AI cannot settle on one winning plan; it has to keep training, refining and expanding its repertoire of strategies.

- Long-term planning: As matches can take up to one hour, it is often not possible to tell right away whether an action has a positive or a negative effect; the payoff of an early decision may only show much later. To analyze and predict the outcome, AI requires much more memory and computational power.

- Real-time: Time constraints are put on the players, forcing them to react quickly as the match unfolds. This is in stark contrast to traditional board games, where players get much more time to think and strategize.

- Large action space: Various units and structures must be controlled simultaneously, creating a combinatorial explosion of possibilities.
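
To put the last point in numbers, here is a back-of-the-envelope sketch. The figures are hypothetical (10 possible orders per unit is an assumption made for illustration, not a property of either game); the point is only how quickly the count grows as units and decisions pile up.

```python
def joint_actions(units: int, orders_per_unit: int) -> int:
    """Distinct joint commands if every unit independently picks one order."""
    return orders_per_unit ** units


def plans(units: int, orders_per_unit: int, steps: int) -> int:
    """Distinct command sequences over `steps` consecutive decisions."""
    return joint_actions(units, orders_per_unit) ** steps


if __name__ == "__main__":
    for units in (1, 5, 20):
        count = joint_actions(units, 10)
        print(f"{units:>2} units: {count:,} joint commands per decision")

    # Even a short stretch of play is far beyond enumeration.
    digits = len(str(plans(20, 10, 100)))
    print(f"20 units over 100 decisions: a number with {digits} digits")
```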

Because the number of possible strategies is far too high, simply enumerating every option to calculate the best one is impractical, and an experienced human player would compensate for slower reactions with a better understanding of the game’s fundamentals. To solve this problem, the DeepMind team used reinforcement learning, essentially making its AI engine, AlphaStar, play millions of matches against itself. The result: AlphaStar learned effective practices and strategies and managed to beat top professional StarCraft II players, developing something akin to its own style of play.
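
Self-play can be sketched on a much smaller game. The example below is not AlphaStar’s method, which relies on deep neural networks and far more elaborate training; it swaps in regret matching, a simple tabular technique, and applies it to rock-paper-scissors, a game with the same “A counters B, B counters C, C counters A” balance. All names and the iteration count are assumptions made for this illustration.

```python
import random

ACTIONS = ("rock", "paper", "scissors")
# PAYOFF[a][b]: reward for playing action a against action b (win 1, loss -1, tie 0).
PAYOFF = [
    [0, -1, 1],   # rock
    [1, 0, -1],   # paper
    [-1, 1, 0],   # scissors
]


def strategy_from_regrets(regrets):
    """Play each action in proportion to its positive accumulated regret."""
    positive = [max(r, 0.0) for r in regrets]
    total = sum(positive)
    if total > 0:
        return [p / total for p in positive]
    return [1.0 / len(regrets)] * len(regrets)  # no regrets yet: play uniformly


def self_play(iterations, seed=0):
    """Let two copies of the same learner play each other; return their average strategies."""
    rng = random.Random(seed)
    regrets = [[0.0] * 3 for _ in range(2)]
    strategy_sum = [[0.0] * 3 for _ in range(2)]

    for _ in range(iterations):
        strategies = [strategy_from_regrets(r) for r in regrets]
        moves = [rng.choices(range(3), weights=s)[0] for s in strategies]
        for player in range(2):
            mine, theirs = moves[player], moves[1 - player]
            for action in range(3):
                # Regret: how much better `action` would have done than the move played.
                regrets[player][action] += PAYOFF[action][theirs] - PAYOFF[mine][theirs]
                strategy_sum[player][action] += strategies[player][action]

    return [[s / iterations for s in sums] for sums in strategy_sum]


if __name__ == "__main__":
    for player, average in enumerate(self_play(50_000)):
        summary = ", ".join(f"{name}: {p:.3f}" for name, p in zip(ACTIONS, average))
        print(f"player {player} average strategy -> {summary}")
```

Neither learner is told what a good strategy looks like. By repeatedly punishing whatever its opponent copy overuses, each one’s average strategy drifts toward an even mix of the three moves, which is the balanced solution for this game.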

Solving Real-World Problems

To paraphrase a popular saying: “Life is a game… with imperfect information.” AlphaStar, having mastered one such game, brings us one step closer to tackling real-world problems. AlphaZero, DeepMind’s chess-playing engine, used reinforcement learning as well and actually reinvented some elements of chess strategy, showing that some strategies previously considered optimal were, in fact, suboptimal. This is the essence of the AI that teams at Google and Elon Musk-sponsored OpenAI are trying to create: something that can offer a different perspective and improve our lives.

Their efforts are already making technological advances possible. DeepMind, for instance, has expanded its areas of operation to health and business:

- In business, DeepMind’s insights have improved the energy efficiency of Google’s data centers, optimized mobile performance on the Android Pie operating system, and enhanced Google Assistant and Google Cloud Platform.

- In health, this technology is used by the UK’s National Health Service to decrease the time it takes to get patients from test to treatment.

In addition, AI systems help us approach other complex tasks, such as hiring and reducing employee turnover. In all of these areas, AI can dissect the given problem much as it analyzes games: these are the variables, these are the conditions, and this is the goal we’re aiming at. Although AI is far from complete autonomy, it provides valuable insight into processes and phenomena we try to understand. Perhaps presenting complex human relationships as a mere set of variables is an oversimplification, but as CPU and GPU power rises, AI will be able to operate with more and more abstract concepts.

As Patrick Henry Winston, Professor of artificial intelligence and computer science at MIT, puts it: “Every time the computer does some narrow thing better than a person, it’s a temptation to think that it’s all over for us… it probably happened first when computers could multiply large numbers very fast. But what goes inside our heads is a form of exotic engineering that we just simply don’t understand yet.” Playing is a crucial element of our learning process — and we hope that letting AI play games will uncover something deep about us.

Author bio: Denis Kryukov is an author at Soshace, an online hiring platform that connects IT professionals and companies.